Data analysis questions and answers pdf Blue Rocks

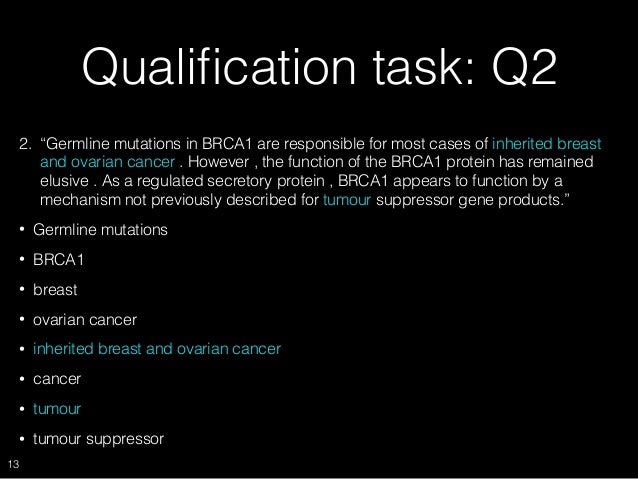

Statistical Data Analysis MCQs Quiz Questions Answers

Data analysis is the process of systematically applying statistical and/or logical techniques to describe and illustrate, condense and recap, and evaluate data.

Free Online data analysis sample questions Practice and

Effective Experiment Design and Data Analysis in …

Final Exam Practice Questions, Categorical Data Analysis. 1. The estimated regression coefficients and associated standard errors for a multiple logistic regression model are provided in Table 1 using data from a prospective …

This is a multi-response variable (a respondent can give more than one response). Unfortunately, it violates a critical assumption in statistical analysis: the independence of responses.
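One common way to work with a multi-response question is to expand it into one 0/1 indicator column per option, so that each column can be analysed as a single-response variable. The sketch below illustrates that idea only; the function name `to_indicators`, the option list, and the sample answers are hypothetical and do not come from the source.

```python
def to_indicators(responses, options):
    """Expand multi-response answers into one 0/1 column per option.

    responses: list of lists, each inner list holding the options one
               respondent selected (possibly none, possibly several).
    options:   the full list of options offered by the question.
    """
    rows = []
    for chosen in responses:
        picked = set(chosen)
        rows.append({opt: int(opt in picked) for opt in options})
    return rows


# Hypothetical question: "Which tools do you use?" (illustrative data only)
options = ["R", "Python", "Tableau"]
responses = [["R", "Python"], ["Tableau"], []]

indicators = to_indicators(responses, options)
for row in indicators:
    print(row)
```

Each respondent still contributes exactly one row, which keeps the rows independent of each other even though a single person may have ticked several options.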

Data preparation is perhaps the most important step in any type of serious data analysis. And while it would be ludicrous to attempt to cover such a broad field of knowledge in one article, we’ve prepared a quick checklist that you can run through when preparing data for analysis.

In a structured survey with numbered questions, the flat file has a column for each question and a row for each respondent, a convention common to almost all standard statistical packages. If the data form a perfect rectangular grid with a number in every cell, analysis is made relatively easy, but there are many reasons why this will not always be the case and flat file data will be …
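The flat-file layout described above (one row per respondent, one column per question) can be sketched with Python's standard `csv` module. This is a minimal illustration rather than a full preparation checklist: the column names, the sample values, and the helper `find_gaps` are assumptions made for the example.

```python
import csv
import io

# Hypothetical flat file: one row per respondent, one column per question.
# Two cells are deliberately left empty so the grid is not fully rectangular.
RAW = """respondent,q1,q2,q3
1,4,2,5
2,3,,1
3,5,4,
"""


def find_gaps(text):
    """Return (respondent, question) pairs whose cell is empty or missing."""
    gaps = []
    for row in csv.DictReader(io.StringIO(text)):
        for column, value in row.items():
            if column == "respondent":
                continue
            # DictReader fills short rows with None; blank cells arrive as "".
            if value is None or value.strip() == "":
                gaps.append((row["respondent"], column))
    return gaps


gaps = find_gaps(RAW)
print(gaps)
```

Listing the empty cells first makes it easier to decide, question by question, whether a gap means "not asked", "not answered", or a data-entry error.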

Data Analysis Questions And Answers Pdf: … using methodical strategies to uncover answers in your data. … important to have a specific question in mind when you begin data analysis so …

We are offering R interview questions and answers to help you perform better in your R job interview by listing the questions most likely to be asked. This interview questions section includes topics such as how to communicate data analysis results using R, the difference between the library and require functions, the function for adding datasets, R data …

Question 3: What is the total number of adult males in the colony (excluding the children)? (a) 3496 (b) 3490 (c) 3500 (d) 3504 (e) None. Question 4: What is the total number of females in the colony?

… between questions, answers and statistics seems to me to be something which … us keep in mind the fact that the latter involves an analysis or a statistical model, and that there may be as many answers to this question as there are analyses or models? Surely much of the blame for such thinking rests with us, the teachers of statistics, who never fail to popularize the rigid formalism of Neyman …

Data Analysis Interview Questions And Answers Pdf. TABLEAU INTERVIEW QUESTIONS & ANSWERS PDF / DATA ANALYST / TABLEAU DESKTOP: SET 1 (TOP 40 TABLEAU INTERVIEW QUESTIONS).

This article expands on this central question by offering 10 additional key questions that can help to provide an even more complete story when analyzing data. The table in figure 1 summarizes these 10 questions with an interpretation for each.

To answer these questions, we analyse what participants report about their participation in liberal adult education courses, about their experiences in those courses, and about the impact that participation has on their lives. We want to know how participation in liberal adult education affects and changes participants’ attitudes, self-concepts, learning biographies, and …

Statistical data analysis MCQs, statistical data analysis quiz answers, learn data analytics online courses. Statistical data analysis multiple choice questions and answers pdf on types of statistical methods, statistical analysis methods, data types in stats, sources of data, statistical techniques for online histogram courses distance learning.

Data Analysis And Interpretation Questions And Answers. Data interpretation questions and answers aptitude, data interpretation questions and answers with explanation for interview, competitive examination and entrance …

This is the generic data analysis process that we have explained in this answer; however, the answer to your question might change slightly based on the kind of data problem and the tools available at …

22/09/2017 · Interview for Data Analysis. What is data analysis? What do you know about the interquartile range as a data analyst? Do you know what data analysis is? What is a long-term outcome in data analysis? What is …
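For the interquartile range question above, a short worked example may help: the interquartile range (IQR) is the third quartile minus the first quartile, so it measures the spread of the middle half of the data. The sketch below uses `statistics.quantiles` from the Python standard library; the sample scores and the helper name `interquartile_range` are illustrative assumptions, and other quartile conventions (for example the default `method="exclusive"`) can give slightly different values.

```python
import statistics


def interquartile_range(values):
    """Return Q3 - Q1 using the 'inclusive' quartile method."""
    q1, _median, q3 = statistics.quantiles(values, n=4, method="inclusive")
    return q3 - q1


# Hypothetical sample; any list of at least two numbers works.
scores = [2, 4, 4, 5, 7, 8, 9, 11, 12]
iqr = interquartile_range(scores)
print(iqr)
```

Because it ignores the lowest and highest quarters of the data, the IQR is less sensitive to extreme values than the full range.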

21/06/2017 · 8 Tips for Asking The Right Data Analysis Questions. Here at datapine we have helped solve hundreds of data analysis problems for our clients. All of our experience has taught us that data analysis is only as good as the questions you ask.

Of these 24 questions, 16 will be on data analysis and 8 will be on recursion and financial modelling. All questions will be compulsory. Section A will be worth a total of 24 marks. Section B will consist of eight multiple-choice questions on each of the four modules in Unit 4. Students must answer questions on two modules. Section B will be worth a total of 16 marks. The total marks for …

Students are required to respond to multiple-choice questions covering the Data analysis core area of study and three selected modules from the Applications area of study in relation to Outcomes 1 and 3. Structure and format: the examination will consist of multiple-choice questions on the core and each of the six application modules. Students will be required to answer all questions on the …


Data Analysis And Interpretation Questions And Answers PDF. 100 High Level Data Interpretation Questions & Answers PDF. Welcome to the www.letsstudytogether.co online learning section. As we know, SBI/IBPS has now conducted its mains examination with the Quantitative Aptitude section under the name Data Analysis & Interpretation.

Online Data Analysis Sample Questions: Practice and Preparation Tests cover Data Analysis Test 1, NTSE/CBSE (Science) - 16, NTSE/CBSE (Science) - 15, Science (Class - …


… select the appropriate data for answering a question. 2. Get a general picture of the information before reading the question. Read the given titles carefully and try to understand their nature. 3. Avoid lengthy calculations. Generally, data interpretation questions do not require extensive calculations and computations. Most questions simply require reading the data correctly and …

Econometric Analysis of Panel Data, Spring 2007 – Tuesday, Thursday: 1:00 – 2:20. Professor William Greene. Midterm Examination. This examination has four parts. Weights applied to the four parts will be 15, 15, 30 and 40. This is an open book exam. You may use any source of information that you have with you. You may not phone or text message or email or Bluetooth (is that a verb?) to “a …

Online Data Analysis Test Questions: Practice and Preparation Tests cover Data Analysis Test 1, Data Sufficiency, Statistics Test - 1, Data Analysis - SAT Book Test.

Effective Experiment Design and Data Analysis in Transportation Research: … implies is to identify and address related questions. Related questions help to define the breadth of the experiment. • Does the product have to be better in all situations? The research question “Is crack sealant A better than crack sealant B?” implies that one product or treatment will be selected over another …


You can start your answer with something fundamental, such as "big data analysis involves the collection and organization of data, and the ability to discover correlations between the data that …

20 Most Popular Data Science Interview Questions (article). Answer: Root cause analysis was initially developed to analyze industrial accidents but is now widely used in other areas. It is a problem-solving technique used for isolating the root causes of faults or problems. A factor is called a root cause if its removal from the problem-fault-sequence averts the final undesirable event from …


6 Questions to Ask When Preparing Data for Analysis (Sisense)


Data Analysis Model and Process Guiding Questions

Data Analysis Interview Questions And Answers (YouTube)

Data Analysis—10 Key Questions and Reasons (Quality Digest)

Free Online Data Analysis Test Questions Practice and

Data Analysis Model and Process: Guiding Questions. Data Coaching Services. Last updated January 25, 2012. The steps are: Frame the Question; Organize for Dialogue; Collect the Data; Analyze the Data; Interpret the Data; Select Actions; Monitor Results.


… and thus alternative ways of seeing and finding answers to the questions one wishes to answer. Implicit in the preceding views of Antonius (2003:2) and Schostak and Schostak (2008:10) are the two methods used to analyse data, namely qualitative and quantitative.